The Hidden Cost of Exporting Data

This post was written by Kit Friend, Agile Coach and EMEA Atlassian Partnership Lead at Accenture – all views are (currently, despite his best efforts) his own.

“Great, how do I export this to a spreadsheet?”

…words to strike fear into the heart of every tooling admin or agile coach! But should we really be so uptight? Surely it can’t hurt for people to take data from your tool of choice and play with it in a tool they’re more comfortable with…

The curious case of the missing fidelity

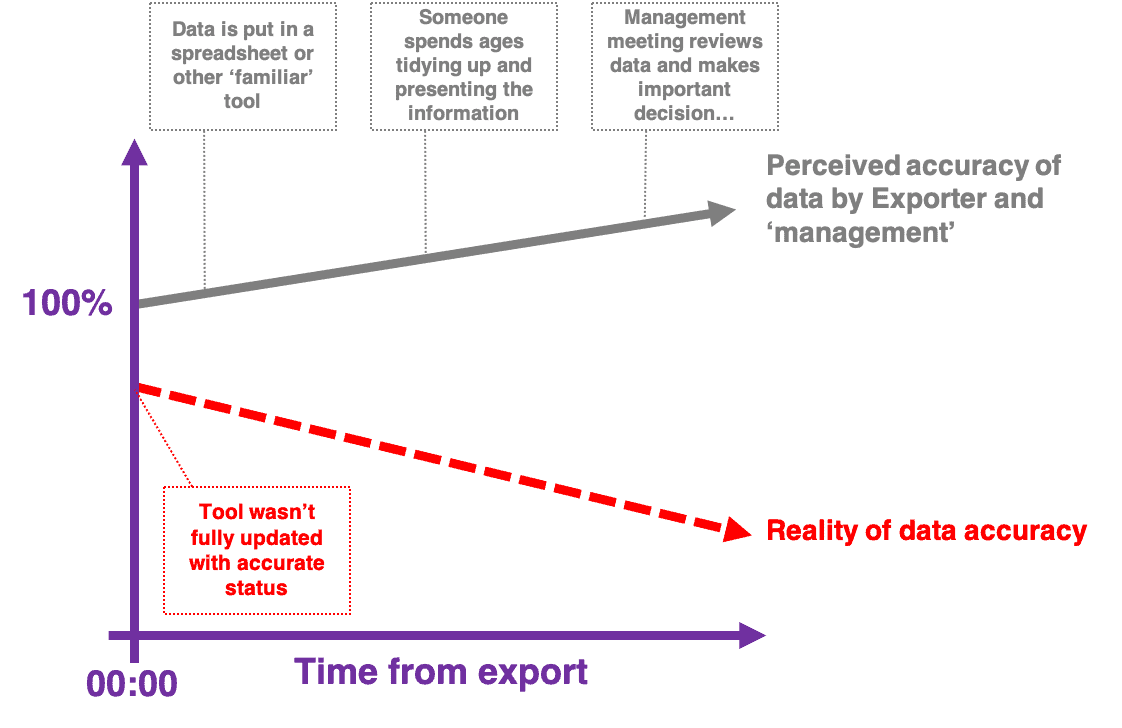

We all know that data is only accurate at the point it’s recorded, but something odd happens to this principle in business. As effort is poured into exporting and manipulating the data, we habitually assume the processing adds both accuracy and value. It is of course true that a raw data set can lack value. I’m a huge fan of Information is Beautiful: skilled visualization and organization can bring huge clarity to noise. But the real power comes when data can be processed and meaningfully visualized in real time.

In a typical business context, and particularly when examining ‘delivery’ type data, this doesn’t happen. Instead we get something like the following:

No one in this scenario is intentionally misleading anyone else (even the team or system which didn’t keep the source data updated), but all the key figures in the Perceived accuracy line of activity willingly engage in what I like to call ‘the beautiful fiction’ of the data they’re looking at. If anything, this is made worse by the act of repeatedly polishing the data, whether in slides, fancy business intelligence tools, or verbal narratives.

Manual data handling comes at a cost

“Eesh… well it would be great to invest in a tool that does that properly but I’ll never get the budget approved — you’ll just have to do it manually”

People are (mostly) great, but we’re just not that efficient when it comes to data processing. Unfortunately, in most organizations the cost of buying a tool to do something properly is very clear, but the cost of hacking together a band-aid workaround is a lot murkier.

When we’re asked to present data for decisions, it’s inevitable that those making the decisions want something both informative and pretty to look at. For many of us working in delivery, this ‘pretty’ element (also known as “suitable for the executives”, “consumable”, or “appealing”, though it all boils down to aesthetics) presents a significant challenge.

The ‘quickest’ fix has normally been to grind the data into a highly bespoke set of slides. For one particularly memorable presentation on this topic, I calculated the cost of presenting a consumable sprint report to management versus the cost of using Screenful (which automates the process, and produces very slick output). The results were pretty horrifying. I assumed that creating a sufficiently appealing set of sprint report metrics every two weeks would take a person of average skill one to two hours each time. On that basis, over the course of a year the automated route was about 97% cheaper. On top of this, the human route gave a new set of report metrics every two weeks; the automated option gave a refreshed view every 15 minutes.
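If you want to run this kind of comparison for your own team, the arithmetic is simple enough to sketch in a few lines of Python. To be clear, this is not my original calculation: the hourly rate and the tool subscription price below are made-up placeholders (the function names are mine too), so swap in your own figures before drawing any conclusions.

```python
# Rough cost comparison: hand-built sprint reports vs. an automated dashboard.
# All monetary figures here are hypothetical placeholders, not real prices.

WEEKS_PER_YEAR = 52


def manual_annual_cost(hours_per_report: float, hourly_rate: float,
                       weeks_between_reports: int = 2) -> float:
    """Yearly cost of one person building each report by hand."""
    reports_per_year = WEEKS_PER_YEAR / weeks_between_reports
    return hours_per_report * hourly_rate * reports_per_year


def percent_cheaper(manual_cost: float, automated_cost: float) -> float:
    """How much cheaper the automated route is, as a percentage of manual cost."""
    return 100 * (manual_cost - automated_cost) / manual_cost


if __name__ == "__main__":
    hourly_rate = 100.0        # assumption: loaded cost of one hour of work
    tool_annual_cost = 120.0   # assumption: yearly subscription for a reporting tool

    # The post's range: one to two hours per report, every two weeks.
    for hours in (1, 2):
        manual = manual_annual_cost(hours, hourly_rate)
        saving = percent_cheaper(manual, tool_annual_cost)
        print(f"{hours}h per report: manual costs {manual:.0f} per year, "
              f"automated is {saving:.1f}% cheaper")
```

Note what this leaves out: it only prices the time spent building the report, not the cost of decisions made on data that is up to two weeks stale.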

Is there a fix?

So why am I writing this blog on unito.io, which is not a metrics or reporting tool? Whilst I’m always a fan of agnosticism and piloting, Unito does offer a fantastic fix for one important problem teams need to solve to get away from exporting: everyone always wants to work in their own tool. It is sometimes possible to push for consolidation, but let’s face it, these things take time and there are always outliers. A typical organization will use a variety of tools, and a key driver behind the export requests is the attempt to provide visibility across everyone’s preferred apps.

Whether you choose to use Unito or to go a different route, the challenge is the same: you need to find ways to link up disparate teams with real data, not immediately inaccurate exports. When you can solve that problem, the magic will follow.